The main purpose of this ongoing blog will be to track planetary extreme, or record temperatures related to climate change. Any reports I see of ETs will be listed below the main topic of the day. I’ll refer to extreme or record temperatures as ETs (not extraterrestrials).😜

Main Topic: Physics-Based Models Outperform AI Weather Forecasts of Record-Breaking Extremes

Dear Diary. Over the weekend I noticed this new report in which physics models remain superior to new artificial intelligence versions when it comes to forecasting extreme heat. As you know, that is what this blog is mainly about in association with climate change. I applaud the effort by all teams to improve weather forecasts when it comes to forecasting extreme heat. That can save lives because it will give city officials more lead time to help protect the public by making sure that cooling centers are up to snuff before any heatwaves set in.

Here are more details from Science on the state of the art of forecasting severe heat. The following article is a bit technical, so all has not been reposted. The original can be found here:

Physics-based models outperform AI weather forecasts of record-breaking extremes | Science Advances

Research Article

ATMOSPHERIC SCIENCE

Physics-based models outperform AI weather forecasts of record-breaking extremes

Zhongwei Zhang https://orcid.org/0000-0002-2070-1993, Erich Fischer https://orcid.org/0000-0003-1931-6737, Jakob Zscheischler https://orcid.org/0000-0001-6045-1629, and Sebastian Engelke https://orcid.org/0000-0001-6356-918XAuthors Info & Affiliations

Science Advances

29 Apr 2026

Vol 12, Issue 18

Abstract

Artificial intelligence (AI)–based models are revolutionizing weather forecasting and have surpassed leading numerical weather prediction systems on various benchmark tasks. However, their ability to extrapolate and reliably forecast unprecedented extreme events remains unclear. Here, we show that for record-breaking weather extremes, the physics-based numerical model High RESolution forecast (HRES) from the European Centre for Medium-Range Weather Forecasts still consistently outperforms state-of-the-art AI models GraphCast, GraphCast operational, Pangu-Weather, Pangu-Weather operational, and Fuxi. We demonstrate that forecast errors in AI models are consistently larger for record-breaking heat, cold, and wind than in HRES across nearly all lead times. We further find that the examined AI models tend to underestimate both the frequency and intensity of record-breaking events, and they underpredict hot records and overestimate cold records with growing errors for larger record exceedance. Our findings underscore the current limitations of AI weather models in extrapolating beyond their training domain and in forecasting the potentially most impactful record-breaking weather events that are particularly frequent in a rapidly warming climate. Further rigorous verification and model development is needed before these models can be solely relied upon for high-stakes applications such as early warning systems and disaster management.

INTRODUCTION

Record-breaking weather extremes, such as the 2021 Pacific Northwest, 2010 Russian and 2003 European heatwaves, and winter storms Lothar in 1999 and Kyrill in 2007, have caused numerous fatalities and severe impacts on society, the economy, and ecosystems (1–5). The level of disaster preparedness and adaptation to extreme events is strongly influenced by events observed in recent decades. Consequently, after extended periods without major events, or when events substantially exceed previous record levels, socioeconomic impacts tend to be particularly large.

In addition to long-term disaster preparedness (6), accurate physics-based numerical weather prediction (NWP) is critical for early-warning systems to save lives and reduce the impacts of climate extremes (7). Recently, a new generation of artificial intelligence (AI) weather models has reached and sometimes exceeded forecast skills of state-of-the-art physics-based NWP systems (8–11). These models offer considerable advantages in speed and energy efficiency, raising important questions about their potential to supplement or eventually replace traditional physics-based NWP systems (12).

Before warnings for population and critical infrastructure are routinely based on AI models, their performance needs to be further evaluated. In particular, their reliability in forecasting extreme events remains less well understood. Such events are, by definition, rare and contribute little to aggregated overall skill metrics (13). Nevertheless, recent studies suggest that AI models perform well—and in some cases even better than numerical models—in forecasting extreme weather events (14, 15), particularly for longer lead times (8, 9).

Current forecast evaluation approaches for extreme events typically focus on extreme events exceeding a certain threshold for one or several given variables, such as extreme wind speeds (15), tropical cyclones (8, 9, 14), and high and low temperatures (9, 14–16). However, due to small sample sizes, the thresholds are often set to, say, the 95th percentile of the test data, thus capturing mostly moderate extremes. Much less is known about record-breaking events, a subset of extreme events that are unprecedented in the observational record. Given the current high rate of global warming, record-breaking events sometimes exceed previous record levels by large margins and have been referred to as black or gray swans (17), or record-shattering extremes (18, 19).

A number of case studies have shown mixed results on the ability of AI weather models to extrapolate beyond the range of their training data. For instance, a seasonal AI forecasting model (20) struggled to predict North Atlantic Oscillation values that extended outside its training distribution (21). While AI models appear to outperform traditional physics-based NWP models on tracking tropical cyclones (8, 9, 22), they tend to underpredict the intensity of the most extreme storms, as measured by mean sea-level pressure (17, 23, 24). Similar limitations in reaching unprecedented amplitudes have also been observed in other high-impact events such as heatwaves, winter storms, or compound extremes (25). On the other hand, the unprecedented 2024 rainfall in Dubai was well predicted by GraphCast, suggesting that generalization to new events may be possible if they share dynamical similarity with past extremes from other regions (26).

However, these insights primarily rely on isolated case studies of specific events, whose conclusions are inherently difficult to generalize due to the unique features of the analyzed events and models. To systematically evaluate extrapolation in state-of-the-art AI weather models, we construct a benchmark dataset consisting of record-breaking events for heat, cold, and wind extremes. This dataset includes all observations during the test years 2018 and 2020 that exceed the respective historical records from the training data of all considered AI models. The records are defined per variable, per grid cell, and per calendar month by using the ERA5 reanalysis data (27) from 1979–2017 with daily observations at 00, 06, 12, and 18 UTC time, yielding a large sample size of record-breaking events even in individual test years (see Materials and Methods). For the year 2020, this yields 162,751 heat, 32,991 cold, and 53,345 wind records, which are spread across different seasons and climatic zones from tropics to high latitudes (Fig. 1, A and B, and fig. S1, A to D). The dataset includes many prominent record-breaking events, such as the Siberian heatwave in early 2020 (28) and the U.S. heatwave of August 2020 (29). Evaluating AI models on this record dataset challenges them to forecast on out-of-distribution data, which is known to be difficult for neural networks in the machine learning literature.

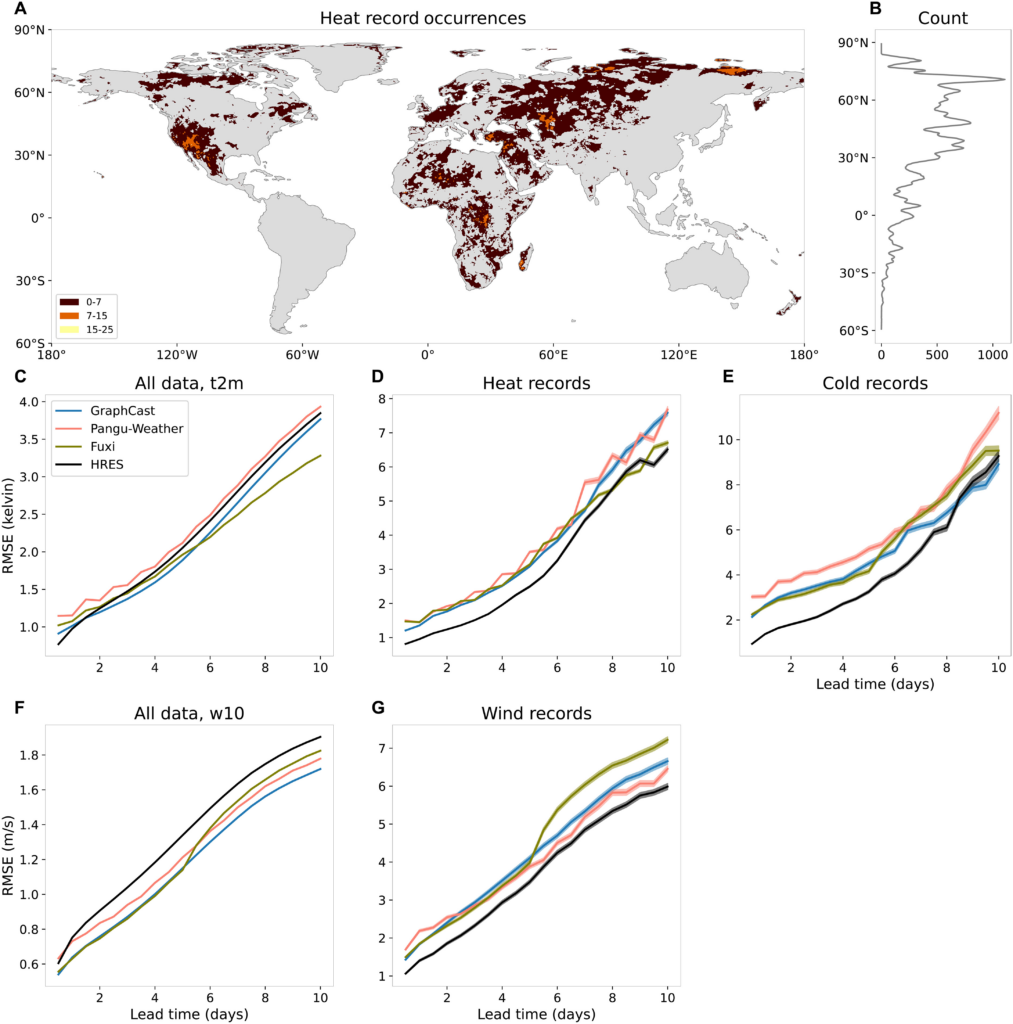

Fig. 1. Model performance on all events and record-breaking events.

(A) Number of heat records in 2020 in ERA5. (B) Number of heat records per latitude. (C to G) Root mean square error (RMSE) of forecasted 2-m temperature and 10-m wind speed over land (excluding the Antarctic region) of HRES, Pangu-Weather, GraphCast, and Fuxi for all data [(C) and (F)] and only record-breaking events [(D), (E), and (G)] in 2020 for different lead times. The transparent shaded areas indicate 95% confidence bands.

We assess the extrapolation performance on our benchmark dataset of record-breaking events of three leading deterministic AI weather models: GraphCast (9), Pangu-Weather (8), and Fuxi (30), as well as the operational variants of GraphCast and Pangu-Weather. Their performance is compared to the physics-based model High RESolution forecast (HRES), which is the deterministic high-resolution configuration of the operational Integrated Forecasting System of the European Centre for Medium-Range Weather Forecasts (ECMWF) and is widely considered as the leading physics-based NWP model.

RESULTS

Model comparison on records’ intensity

Consistent with previous studies (8, 9, 30, 31), we find that, on overall performance, all AI models—except Pangu-Weather—outperform the physics-based ECMWF model HRES in forecasting 2-m temperature across most lead times (Fig. 1C). Forecast accuracy is quantified using root mean square errors (RMSEs), computed over all 00 and 12 UTC time steps in test year 2020 and over all land grid points (excluding the Antarctic region; see Materials and Methods). For 10-m wind speed, all AI models consistently outperform HRES across nearly all lead times (Fig. 1F).

However, the predictive skill is drastically different for record-breaking temperature and wind events in 2020. Restricting the RMSE to record-breaking events, the physics-based HRES model consistently outperforms all AI models for hot and cold temperature records as well as wind speed records across almost all lead times (Fig. 1, D, E, and G). The performance gap is most pronounced for short lead times. For lead times beyond 5 days, HRES still generally performs better but to a lesser extent. This aligns with previous findings that AI models tend to perform relatively better at longer lead times (9).

Because of limited data availability [GraphCast forecasts are only available for 2018 and 2020 on WeatherBench 2 (31), and Fuxi forecasts are only available for 2020], the evaluation is shown for a single year only as in most previous studies (8, 15). We observe the same pattern in 2018 (fig. S2). The years 2018 and 2020 are distinctly different in terms of El Niño–Southern Oscillation (ENSO) conditions, with 2018 transitioning from La Niña to El Niño and 2020 undergoing a strong El Niño to La Niña shift. Since ENSO strongly influences the occurrence of temperature records (32), particularly in the tropics, the consistent outperformance of HRES across both years shows the robustness of the results. The better skill of HRES in predicting record-breaking events is further consistent across different seasons and a wide range of different climate zones, including tropics, subtropics, mid-latitudes, and northern high latitudes (Fig. 1A and figs. S3 and S4), although there are few or no record-breaking events in South America, Southeast Asia, maritime continent, or Australia. To remove the temporal dependence in our test records data, we further evaluated the forecasts of record-breaking events that have the largest exceedances per month at each grid point. Again, HRES consistently outperforms the three AI models for almost all lead times (fig. S5).

While it is common to evaluate ERA5-trained AI models against ERA5 reanalysis, and HRES against its own analysis at lead time 0 (HRES-fc0) (8, 9, 30) (see Materials and Methods), this approach can complicate comparisons due to different horizontal resolution: ERA5 has a resolution of 0.25°, whereas HRES operates at 0.1°. To assess the sensitivity of our findings to the choice of different reference datasets, we also evaluate operational versions of GraphCast and Pangu-Weather against HRES on a common test dataset of record-breaking events identified using HRES-fc0 as observational ground truth. Also in this setting, HRES consistently outperforms the AI models on the records (fig. S6).

Following (9), we have focused on lead times that are multiples of 12 hours, which lead to record-breaking events at 00/12 UTC [as all AI forecasts are only available for initializations at 00/12 UTC on WeatherBench 2 (31)]. When considering lead times at 6 hours, 18 hours, etc., more record-breaking events appear in regions such as South America, Southeast Asia, and Australia (figs. S7A and S8A). As shown in previous studies (8, 30), in this case, the ranking of competing model forecasts remains the same and HRES consistently outperforms the AI models (figs. S7, C to G, and S8, C to G).

Selecting a subset of extreme events based on observations can favor models that produce too many extreme forecasts—a problem known as the forecaster’s dilemma (33) (see Materials and Methods for discussion). Thus, we construct an alternative benchmark avoiding the forecaster’s dilemma, based on events where the forecast itself, rather than the observation, exceeds the training record (34). Results from this forecast-conditioned evaluation (fig. S9) are consistent with the previous conclusion that HRES outperforms current AI models in forecasting records.

AI models underestimate intensities of records

While we demonstrate that AI models underperform compared to HRES in forecasting record-breaking events, their errors may arise from over- or underprediction of event intensity. When considering all data of the test year 2020, all models have relatively small biases (GraphCast slightly underestimates 2-m temperature, while Fuxi overestimates 2-m temperature for lead times longer than 7 days; HRES and Pangu-Weather also underestimate 2-m temperature for long lead times, but with a smaller bias than GraphCast; all models slightly underestimate 10-m wind speeds) (fig. S10). To better understand model behavior beyond their training domain, we compare forecast accuracy and bias against the record exceedance, that is, the margin by which a record is exceeded. We find that AI models generally underpredict temperature during high records and overpredict during low records. This pattern is shown for GraphCast and heat records (Fig. 2A). The systematic underprediction is remarkably consistent across regions, seasons, and location in tropics, subtropics, and mid- to high-latitudes, despite the fact that the physical drivers of heat records vary substantially across regions. This behavior is not limited to a single model: Other AI models show similar patterns of intensity underestimation, while HRES demonstrates a more balanced distribution of over- and underpredictions (fig. S11). These results strongly suggest that AI model forecast errors are at least partly due to systematic extrapolation limitations.

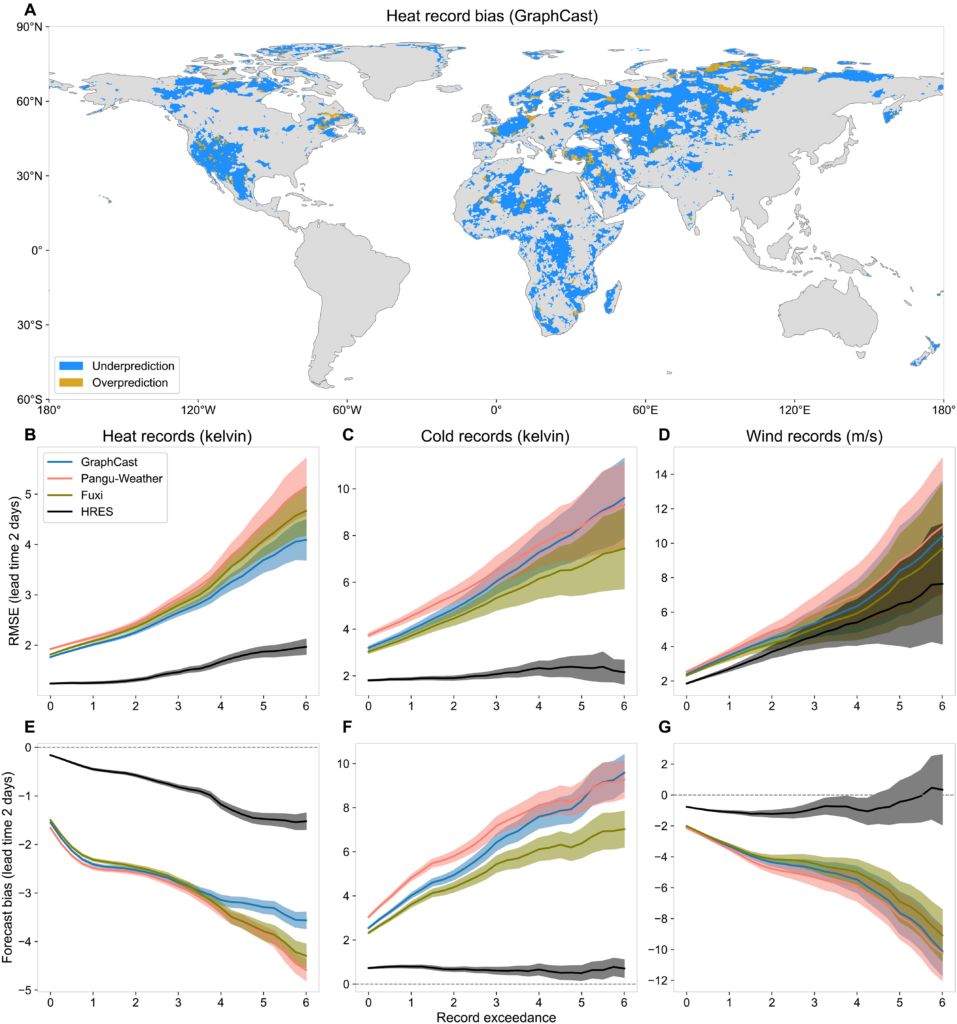

Fig. 2. Forecast bias against record exceedance.

(A) Forecast bias of lead time 2 days of the maximum heat records (GraphCast). (B to D) RMSE of 2-m temperature for heat and cold records, and 10-m wind speed for wind records for events in 2020 that exceed the record by at least a certain margin (x axis). Only land pixels (excluding the Antarctic region) are considered. (E to G) Forecast bias of heat, cold, and wind records, for events that exceed the record by at least a certain margin. The transparent shaded areas indicate 95% confidence bands.

For all record types, the errors of the three AI models seem to grow almost linearly with respect to the degree of record exceedance (Fig. 2, B to D, for a lead time of 2 days; additional lead times in fig. S12). This trend indicates that forecast bias is the primary driver of error (Fig. 2, E to G, and fig. S10): The greater the record exceedance, the larger the underestimation of event intensity. The models behave as if their predictions have an implicit (soft) cap at a certain local value. In contrast, the physics-based HRES model is more robust to extreme record exceedances. For cold records, HRES exhibits a nearly constant error across increasing exceedances. For heat and wind records, it shows a mild tendency of underestimation, though far less so than AI models. Overall, HRES exhibits lower forecast bias for all record types, and bias is not the dominant source of error, particularly for cold and wind records.

This behavior, shown here for the evaluation year 2020, is fully consistent with results from both the operational forecasts in 2020 and non-operational forecasts in 2018 (figs. S13 and S14). The systematic, one-sided bias observed across event types, lead times, regions, and independent years provides strong evidence that current AI models have a structural extrapolation problem when forecasting record-breaking events.

Model comparison on records’ occurrence

We further test the ability of AI models to predict not only the intensity but also the frequency of record-breaking events. We find that, in addition to underestimating event intensity, AI models systematically underpredict the number of records relative to their ERA5 ground truth (Fig. 3, A to C). This underestimation results in a low number of true positives and a high number of false negatives, and consequently low recall (defined as the ratio of true positives to the observed positives) (fig. S15, A to C). In contrast, HRES forecasts a number of records comparable to its HRES-fc0 ground truth, with a slight overestimation for heat records at smaller lead times.

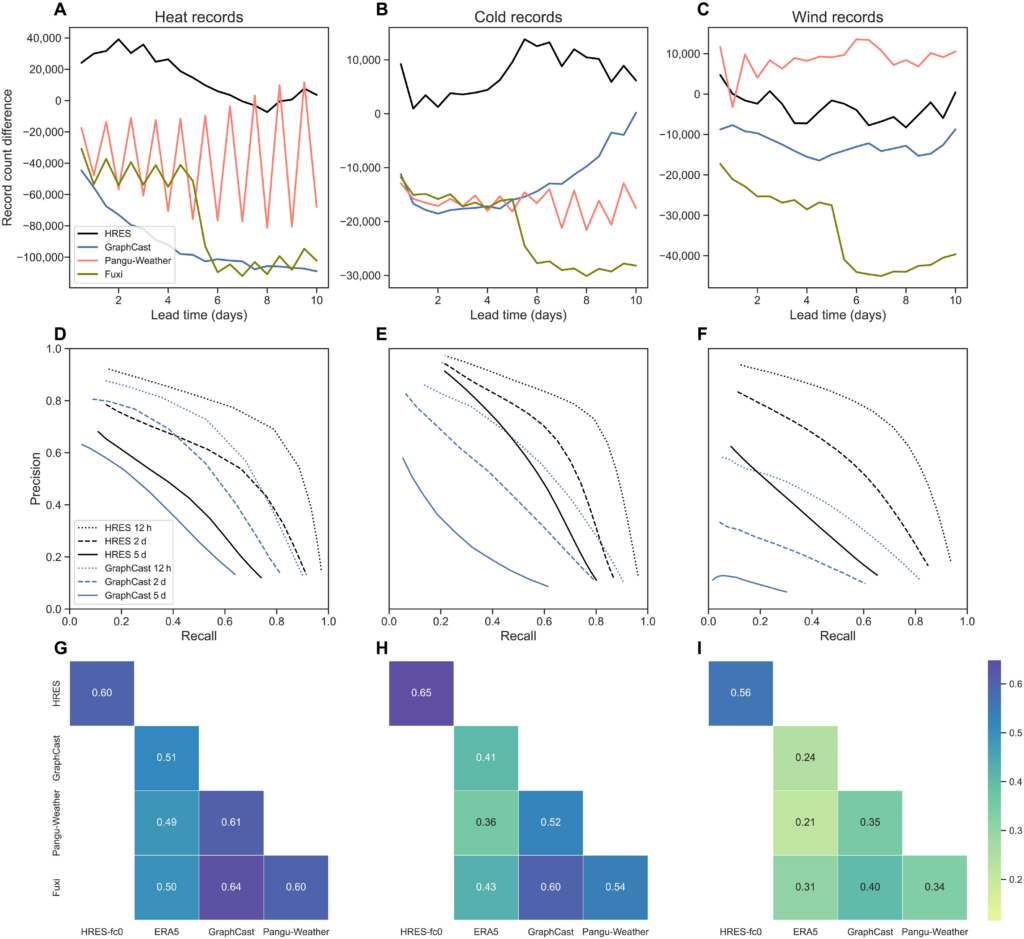

Fig. 3. Prediction of occurrence of record-breaking events.

(A to C) Difference between the number of heat, cold, and wind records in the forecasts and in their ground truth data. Only land pixels (excluding the Antarctic region) are considered. (D to F) Precision and recall curves of GraphCast and HRES forecasts when the records are used as the threshold for different lead times. (G to I) Correlations between the indicator functions of whether the ground truth or 2-day forecasts exceed the record. Pangu-Weather uses two different models (6 and 24 hours) for different lead times, resulting in the zigzag pattern of its record counts.

Correctly predicting the number of record-breaking events does not imply accurate timing. In risk management, the trade-off between false positives and false negatives is typically evaluated using precision-recall curves, where high precision corresponds to low numbers of false positives and high recall corresponds to low numbers of false negatives, and the curve is obtained by comparing the forecast to different levels of thresholds (see Materials and Methods). Across all record types and lead times, HRES’s precision-recall curves are consistently better than GraphCast’s, in the sense that they have higher precisions for the same recall (or higher recalls for the same precision), indicating superior classification performance for heat, cold, and wind records (Fig. 3, D to F). This is in contrast with earlier results that demonstrate that GraphCast outperforms physics-based models for more moderate extreme events (9). Similar results are observed for Pangu-Weather and Fuxi, where HRES again shows a better classification skill across all lead times (figs. S16 and S17).

As an additional evaluation, we convert both forecast and ground truth into binary variables (1 if a record is exceeded and 0 otherwise) and compute the correlation between them (see Materials and Methods). This metric complements the precision-recall analysis by incorporating true negatives and measuring the degree of dependence between different models’ forecasts. HRES has a higher correlation with its ground truth HRES-fc0 than the AI models with their ground truth ERA5, reaffirming its superior performance in forecasting record-breaking events (Fig. 3, G to I). All AI models are positively correlated with each other, showing that they tend to make errors on the same events. This may be due to shared biases learned from their common training data.

DISCUSSION

Our findings consistently show that current AI models underperform HRES in forecasting record-breaking events. They tend to underpredict the intensity and frequency of heat, cold, and wind speed records, with greater forecast biases the larger the record margin. This strongly suggests a systematic extrapolation problem in these models.

Although our evaluation study is restricted to 2018 and 2020, our results are likely to hold in more recent years, as AI models tend to perform worse when the test year is further from the training years (9). Because of substantial regional biases and high resolution dependence in the ERA5 precipitation data (35), here we followed (9) and excluded precipitation in the evaluation. While in this work we evaluated AI weather forecasts against their respective ground truth data ERA5 or HRES-fc0, it would be interesting to evaluate against other data such as in situ observations, to assess their robustness. Since our records are defined locally per grid cell, one extreme event might be counted multiple times across neighboring locations during evaluation. Therefore, it would be worthwhile to investigate methods that ensure spatial independence among the evaluated events. We leave these open questions for future research.

All current state-of-the-art AI weather models are built on neural network architectures such as transformers (8, 30) or graph neural networks (9, 10, 36). In machine learning, extrapolation, also referred to as out-of-distribution generalization, is a well-known fundamental challenge in these models. It has been observed in a range of applications, including image classification (37), protein fitness prediction (38), and large language models (39). Our record benchmark dataset is explicitly designed to test this out-of-distribution problem within AI weather models (see Materials and Methods for discussion).

The AI models studied here do not use any knowledge of physical principles and do not explicitly enforce energy balances or other physical constraints (40, 41). They are purely data-driven and essentially interpolate between observed historical weather patterns in the training period 1979–2017 to produce forecasts for new initial conditions in the test period. This is in stark contrast to physics-based numerical models like HRES that strongly rely on partial differential equations describing the evolution of the atmosphere based on our understanding of physics. This fundamental difference in modeling philosophy likely explains the discrepancy in performance between AI and physics-based NWP models for record-breaking events (Fig. 1, C to G). While AI models excel when the test set closely resembles the training distribution, capturing complex atmospheric patterns and improving skill on average conditions, they struggle when forecasting unprecedented events outside the training domain, even at short lead times. The nearly linear increase of the biases with record exceedance (Fig. 2, E to G) suggests an implicit cap in AI forecasts around the most extreme training observation. Physics-based models do not have such a bound since physical principles allow them to extrapolate, and, consequently, they exhibit less bias across record magnitudes.

We have focused on deterministic AI weather forecasting models, which issue a point forecast for the mean of future weather states. To account for the uncertainty associated with the point forecast arising from the initialization and the model, a number of probabilistic AI weather models have been developed recently (36, 42, 43). Deterministic AI models are often trained by minimizing the RMSE loss function and are designed to predict the mean of the distribution. Thus, they tend to smooth out fine-scale spatial features such as sharp wind peaks. By contrast, probabilistic AI weather models are trained by minimizing proper scoring rules (42, 43), aiming to forecast the whole distribution and avoid such smoothing. However, both deterministic and probabilistic AI models are trained on the same historical ERA5 reanalysis data, meaning that even these probabilistic models likely face similar extrapolation challenges when forecasting out-of-distribution, record-breaking events.

Several promising avenues exist to address this shortcoming in future generations of AI weather models. One strategy is data augmentation, a widely used technique in machine learning to improve robustness to unseen scenarios by enriching the training data (44). In weather and climate modeling, a key advantage is that numerical climate models can produce very large amounts of physically plausible extreme events outside the training domain. Augmenting training with simulations from different climate regimes (11) or record-breaking events from ensemble boosting (19) could allow AI models to learn from more extreme events than in the original training data. This approach has already shown promise: FourCastNet’s (22) performance on tropical cyclones improves substantially when trained on datasets that include such events (17). Another promising direction involves hybrid models and physics-informed neural networks, where specific parameterizations in physical climate models are replaced with AI components (45) or neural networks are trained while respecting specific physical laws described by nonlinear partial differential equations (46). These models combine the efficiency and learning capacity of AI models with the physical consistency and extrapolation ability of physical models. Finally, to improve extrapolation performance on extremes, it may be possible to adapt principles from statistical learning and extreme value theory (47–49).

Given the remarkably fast evolution of AI models in recent years, there are promising ways to further improve these models even for forecasting record-breaking extremes that will continue to frequently occur in a rapidly warming climate. Nevertheless, the current generation still underperforms HRES exactly during the potentially most impactful weather events, including record-breaking heat and cold events as well as wind storms. Thus, it remains vital to fund and run physics-based NWP and AI weather models in parallel and to rigorously evaluate their performance for the most impactful type of weather events.

MATERIALS AND METHODS

Models and data

For the definition of records, we use the ECMWF’s ERA5 reanalysis data (27) from 1979–2017 with daily observations at 00, 06, 12, and 18 UTC times. This dataset coincides with the training data of almost all AI models considered in this paper. The time points in this training data are denoted by Tsubtrain . The ERA5 data are available on a 0.25° by 0.25° latitude-longitude grid. Throughout the paper, we only consider data over land. We use the land-sea mask from the ERA5 and follow ECMWF (50) by defining a grid cell as land if more than 50% of the cell is covered by land; otherwise, it is considered as sea. We exclude the Antarctic region (grid cells with latitude in the range (−60°, −90°]) due to aberrant behavior exhibited by some AI models in this region, and denote the remaining set of land grid cells (244,450 grid cells in total) from the ERA5 dataset by Gsub0.25°.

We use forecasts from the state-of-the-art AI models GraphCast (9), Pangu-Weather (8), and Fuxi (30) from a test period Tsubtest , which is either of the year 2018 or 2020 in our analyses. For the same period, we use forecasts from the physics-based HRES model of ECMWF for comparison. All the forecast data are publicly available from WeatherBench 2 (31). Pangu-Weather and Fuxi are trained and validated on ERA5 data from 1979–2017; the GraphCast forecast data for years 2018 and 2020 are produced by two slightly different versions of GraphCast, i.e., the 2018 data are generated by the GraphCast model trained on ERA5 data from 1979–2017, while the 2020 data are generated by the GraphCast model trained with ERA5 data from a slightly extended period 1979–2019. In addition, we also use the operational versions of GraphCast and Pangu-Weather. The former has been fine-tuned on the HRES-fc0 data from 2016–2021, while the latter was used in an operational setting without fine-tuning.

As ground truth for the AI models, we use ERA5 data with locations in in the test period. For HRES and the operational AI models, we use HRES-fc0 as ground truth. Using these two different datasets to evaluate the forecasts against is the standard approach in the literature of AI weather models to avoid unfair comparisons (8, 9, 30).

Much more here: Physics-based models outperform AI weather forecasts of record-breaking extremes | Science Advances

Here are some “ETs” recorded from around the U.S. the last couple of days, their consequences, and some extreme temperature outlooks, as well as any extreme precipitation reports:

Here is More Climate News from Monday:

(As usual, this will be a fluid post in which more information gets added during the day as it crosses my radar, crediting all who have put it on-line. Items will be archived on this site for posterity. In most instances click on the pictures of each tweet to see each article. The most noteworthy items will be listed first.)